Your DAM Sees a Beach. We See What It Means.

Most DAMs tell you what's in your photos. DAMdaryl tells you what they mean. Here's why that gap is costing your team hours and your brand its coherence.

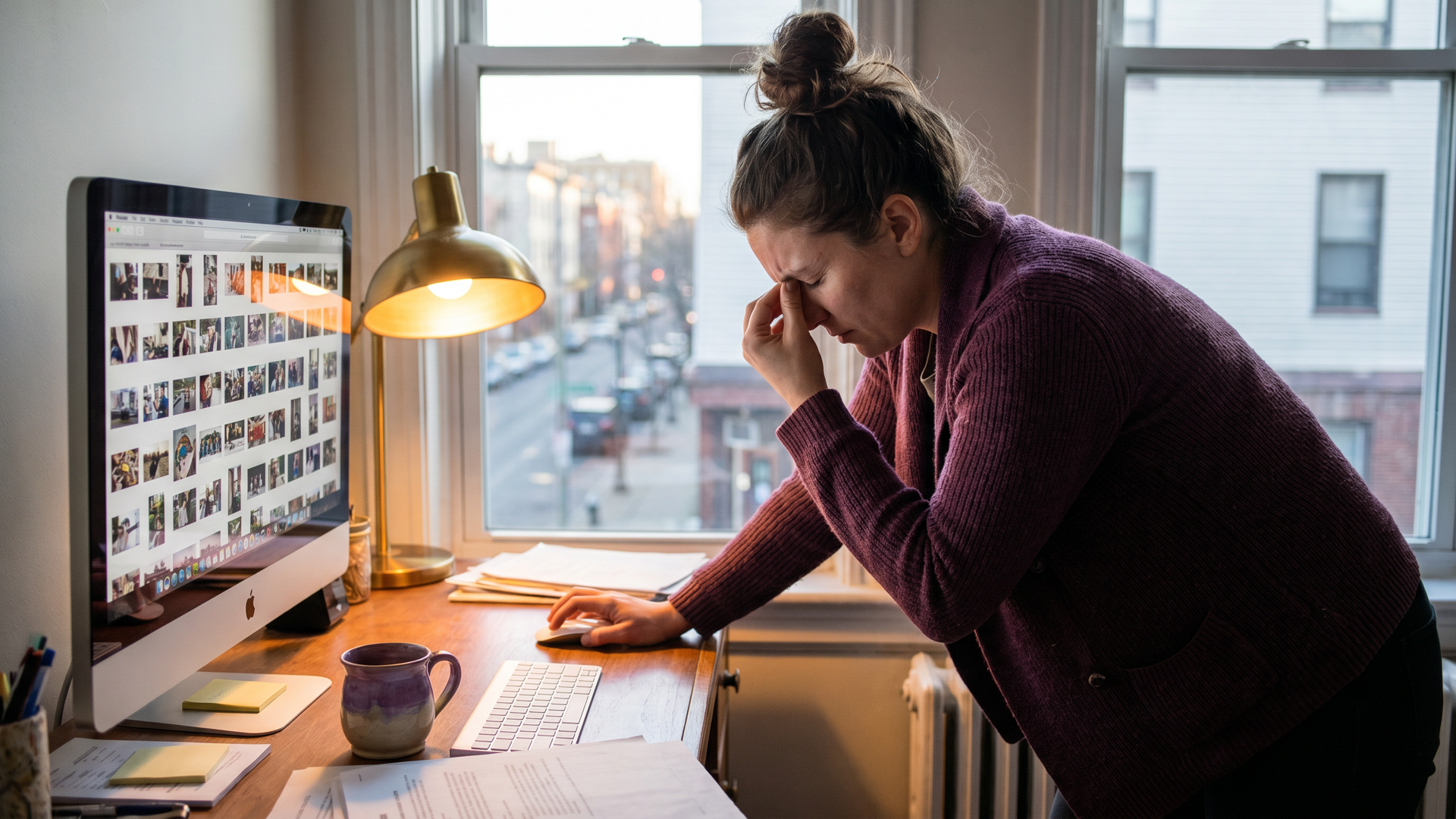

It's 2 PM on a Tuesday. You need a photo for tomorrow's campaign post. Something warm, aspirational, real. Not staged. You open your DAM, type "couple outdoor," and get 847 results sorted by upload date.

You scroll for twelve minutes. You know the photo exists. You saw it three months ago in a batch from the agency. It had the right energy. Your DAM has no idea what you're talking about.

This is the problem. Not file storage. Not organization. The problem is that most DAMs read assets like file inspectors. They see what's in the frame. They don't see what the frame means.

What every DAM gets right (and where they stop)

Digital asset management tools are good at one thing: cataloging. Object recognition, auto-tagging, smart search by visual element. Feed a photo of a beach into most modern DAMs and they'll tag it: sand, water, sky, people, sunset. Accurate. Thorough. Completely useless when you're trying to find an asset that conveys "aspirational warmth without looking like a stock photo."

The category isn't broken because it's unsophisticated. It's broken because it optimized for the wrong question.

The right question isn't "what's in this asset?" It's "what does this asset do to the person who sees it?"

A photo of two people on a beach at golden hour might mean:

- Nostalgia, if the tones are warm and slightly desaturated

- Aspiration, if the composition is clean and the subjects are moving toward something

- Intimacy, if the crop is tight and the subjects face each other

- Lifestyle brand confidence, if the product appears naturally in frame

Same elements. Completely different meaning. Object detection sees a beach. Brand interpretation sees what that beach means to your customer.

Other DAMs index what's IN an asset. DAMdaryl indexes what the asset DOES.

How most tools search (and why it fails marketers)

Type "beach sunset couple" into a legacy DAM and it matches your keywords to its tags. Works fine if your search language perfectly mirrors the tagger's language. Fails completely when it doesn't.
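To make that failure concrete, here is a minimal sketch of exact keyword-to-tag matching in Python. The library, file names, and tags are invented for illustration, and no real DAM's search API is shown; it only demonstrates why a query in the vocabulary of intent comes back empty.

```python
def keyword_search(query: str, library: dict[str, set[str]]) -> list[str]:
    """Return assets whose tags contain every query term (exact match only)."""
    terms = set(query.lower().split())
    return sorted(name for name, tags in library.items() if terms <= tags)


# Hypothetical library: names and tags are made up for this example.
library = {
    "IMG_0412.jpg": {"beach", "sunset", "couple", "sand", "water"},
    "IMG_0977.jpg": {"office", "laptop", "desk"},
}

# The query mirrors the tagger's language, so the beach photo is found.
literal_hits = keyword_search("beach sunset couple", library)

# The query describes intent, so no tag matches and nothing is returned.
intent_hits = keyword_search("aspirational warmth", library)

print(literal_hits, intent_hits)
```

The beach photo may be exactly the "aspirational warmth" the searcher wants, but nothing in its tags says so.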

Creative directors don't search "couple at sunset." They search "aspirational warmth, not staged." A content manager hunting for a product shot doesn't type "skincare bottle on table." They describe "premium, clinical, minimal, Q4 gifting energy."

That's the vocabulary of intent. Most tools don't speak it.

They were built for IT administrators and creative librarians who care about organization, taxonomy, version control, rights management. That's real work. It's just not the work of someone trying to find the right photo for tomorrow's post.

The DAM industry shipped a wave of AI features in the last eighteen months. Better auto-tagging, smarter search, bulk organization. All of it got better at answering the original question: what's in this asset?

Nobody shipped a tool that answers the question marketers actually have.

Most DAMs identify, tag, and organize. We interpret.

What we built instead

DAMdaryl connects your content brief to your asset library.

Paste a social caption. Paste a campaign brief. Describe the moment you're trying to create. DAMdaryl reads that against every indexed asset in your library and returns ranked recommendations with reasoning.

Not "here are assets tagged beach." Here are the seven assets from your library where the composition, color temperature, subject energy, and visual tone best match the brand attributes your caption is activating.

The reasoning matters. A recommendation without an explanation is a guess with confidence. We surface why each asset ranked where it did. For example: "This photo scores 87 for brand match because the composition signals aspiration, the warm-to-cool balance matches your Q4 campaign tone palette, and the subject's posture activates independence without isolation."
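The idea of a ranked recommendation that carries its own explanation can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not DAMdaryl's actual scoring: the attribute names, the brief weights, and the per-asset values are all hypothetical, and a simple weighted average stands in for whatever a production system would do.

```python
from dataclasses import dataclass


@dataclass
class Ranked:
    name: str
    score: int       # 0-100 match score
    reasoning: str   # short explanation of what drove the score


def rank_assets(brief_weights, assets, top_n=3):
    """Score each asset as a weighted average of attribute values in [0, 1]."""
    total = sum(brief_weights.values())
    ranked = []
    for name, attrs in assets.items():
        # Contribution of each attribute the brief cares about.
        contributions = {a: w * attrs.get(a, 0.0) for a, w in brief_weights.items()}
        score = round(100 * sum(contributions.values()) / total)
        # Explain the score by naming the two largest contributions.
        strongest = sorted(contributions, key=contributions.get, reverse=True)[:2]
        ranked.append(Ranked(name, score, "strongest match on " + " and ".join(strongest)))
    ranked.sort(key=lambda r: r.score, reverse=True)
    return ranked[:top_n]


# Hypothetical brief and library, invented for this example.
brief = {"aspiration": 0.5, "warmth": 0.3, "candid": 0.2}
assets = {
    "cafe_window.jpg": {"aspiration": 0.9, "warmth": 0.8, "candid": 0.9},
    "boardroom.jpg": {"aspiration": 0.4, "warmth": 0.2, "candid": 0.1},
}

results = rank_assets(brief, assets)
for r in results:
    print(r.name, r.score, r.reasoning)
```

The design point is that the score and the explanation come from the same per-attribute contributions, so the reasoning cannot drift away from the ranking.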

That's what a creative director reading your library would tell you. We built a system that does it at scale.

What intent matching actually looks like

A beta user at a mid-size B2B brand pasted this caption last month: "The work doesn't stop when the meeting ends. Neither should your tools."

They wanted something that read as "focus, momentum, professional but real." Their library had 4,200 assets. Their old system would have returned everything tagged "office" or "work."

DAMdaryl returned eleven assets ranked by brand match. The top three: a candid shot of someone reviewing documents at a coffee shop window (87/100), a tight crop of hands on a laptop with ambient lighting (84/100), and a wide desk shot with warm evening light (79/100). Each with a sentence of reasoning about why it matched the caption's register.

They used the first one. It was from a shoot eighteen months ago they'd stopped thinking about.

That's the retrieval problem solved. Not better tags. Better interpretation.

What we learned from 7,000 assets

We've indexed over 7,000 brand assets across the beta. A few things that volume taught us.

First: most brand libraries are effectively misfiled. Not because people are disorganized. Because the file-naming system made sense to whoever organized it in 2019 and to no one else since. final_v3_REAL.jpg is not an edge case. It's the median.

Second: the best assets are rarely the most recent ones. Brands over-rely on the last agency batch because that's what they can find. There are usually strong, underused assets from two or three campaigns back that nobody touches because nobody can find them. The library has depth. The retrieval system doesn't.

Third: the search failure compounds. Every time someone can't find the right asset, they either settle for something misaligned or go back to the agency for a new shoot. Settling costs brand coherence. Going back to the agency costs real money.

DAMs were supposed to solve this. They solved the storage problem. They didn't solve the retrieval problem.

The gap nobody talks about

Here's the sentence that frames the whole problem: the people who buy DAMs are not the people who use them.

IT leads, procurement, ops managers. They evaluate on storage, governance, permissions, integrations. They hand it over to the marketing team and the marketing team learns to work around it.

The workaround is searching by file name. Approximate guesses at the folder where something might live. The Slack channel where someone asks "does anyone remember that photo from the Iceland shoot?" Someone knows. Someone always knows. That's a system. It's just a system built on people instead of software.

DAMdaryl is built for the people who use the library, not the people who buy it.

The business case

For the marketers reading this: none of that needed an ROI argument. You know the hourly cost of bad asset search.

For their managers: the average marketing team at a 50-to-100-person B2B brand has between 3,000 and 15,000 brand assets. A legacy DAM or Drive folder retrieves by file name and manual tag. DAMdaryl retrieves by intent. If your team spends four hours a week hunting for the right asset, that's roughly 200 hours a year per person, assuming about 50 working weeks. At any loaded hourly rate, the math isn't interesting. It's decisive.
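The back-of-envelope math is easy to rerun with your own numbers. In this sketch, only the four hours a week comes from the paragraph above; the 50 working weeks, the $60 loaded hourly rate, and the five-person team are assumptions chosen purely for illustration.

```python
def annual_search_cost(hours_per_week, working_weeks, loaded_hourly_rate, team_size):
    """Return (hours per person per year, total yearly cost for the team)."""
    hours_per_person = hours_per_week * working_weeks
    total_cost = hours_per_person * loaded_hourly_rate * team_size
    return hours_per_person, total_cost


# Assumed inputs: 50 working weeks, $60/hour loaded rate, team of 5.
hours, cost = annual_search_cost(
    hours_per_week=4,
    working_weeks=50,
    loaded_hourly_rate=60.0,
    team_size=5,
)
print(f"{hours} hours per person, ${cost:,.0f} per year for the team")
```

Swap in your own rate and headcount; the hours-per-person figure only changes if the weekly search time does.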

The secondary cost is brand coherence. Teams that can't find the right asset use the available one. The available one is usually wrong. Brand tone drift isn't a design problem. It's a retrieval problem. A brand match score of 87 beats a brand match score of 61. Over a hundred posts a year, the delta compounds.

Try it

DAMdaryl is in beta. We're working with small-to-mid marketing teams that have an asset chaos problem they've been tolerating too long.

You connect your existing storage: Drive, Dropbox, or wherever assets have been accumulating. We index. You paste your first caption and watch what comes back.

Book a beta demo at damdaryl.ai.

Other DAMs index what's in your assets.

We index what they do.